Conditional Deep Unrolling for LiDAR Super-Resolution and Improved Segmentation

We recently shared a new publication in IEEE Transactions on Artificial Intelligence presenting an end-to-end approach for LiDAR super-resolution and improved segmentation. The work focuses on a practical challenge in autonomous mobility: how to improve perception when vehicles rely on lower-cost LiDAR sensors that produce sparse data.

The main idea is to move beyond treating LiDAR enhancement as a separate preprocessing step. Instead, the proposed framework connects data enhancement directly with the perception task, helping the system reconstruct the scene in a way that is more useful for semantic understanding.

What was Developed?

The paper introduces a conditional deep unrolling framework for LiDAR super-resolution. In simple terms, the method uses semantic cues extracted from the low-resolution input to guide the reconstruction process toward regions that matter most for perception. This makes the super-resolution stage more task-aware and better aligned with the needs of downstream segmentation.

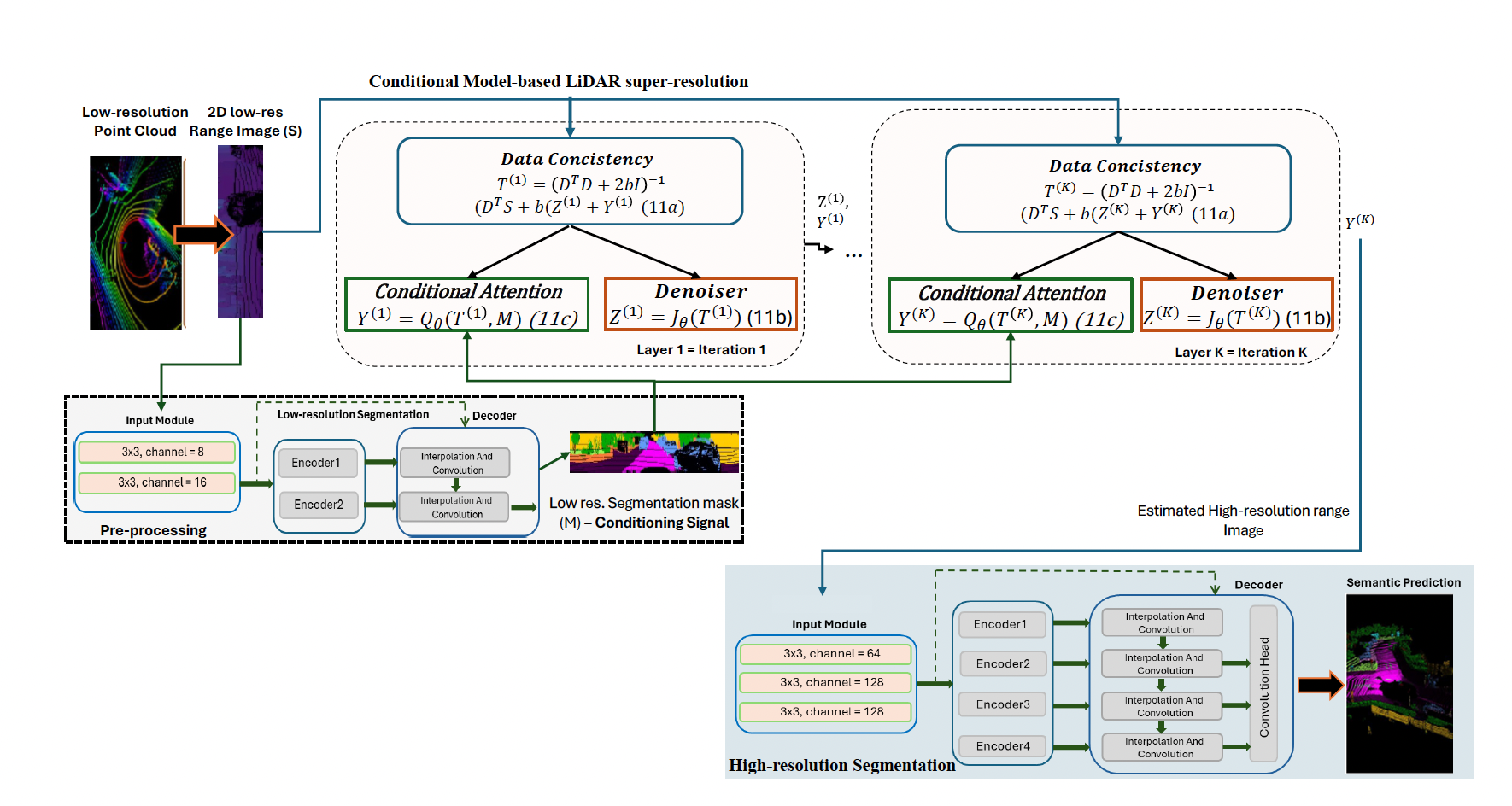

The proposed architecture combines:

- A data-consistency step that keeps reconstruction aligned with the measured LiDAR signal.

- A learnable denoising module that helps restore realistic structure and suppress artifacts.

- A conditional attention mechanism that injects semantic guidance into the reconstruction process.

- An end-to-end integration with a high-resolution segmentation network.
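To make the interplay of the first three components more concrete, here is a minimal NumPy sketch of a generic unrolled reconstruction loop on a LiDAR range image. It is not the paper's implementation. The row-subsampling degradation operator, the fixed smoothing filter standing in for the learnable denoiser, the per-pixel gate standing in for the conditional attention mechanism, and all function and parameter names are assumptions made purely for illustration. The segmentation network and end-to-end training are left out.

```python
import numpy as np

def downsample(x, factor):
    # Assumed degradation operator A: keep every `factor`-th row
    # (LiDAR channel) of a high-resolution range image.
    return x[::factor, :]

def upsample_adjoint(y, factor, out_rows):
    # Adjoint A^T of row subsampling: put rows back, zeros elsewhere.
    x = np.zeros((out_rows, y.shape[1]), dtype=y.dtype)
    x[::factor, :] = y
    return x

def data_consistency(x, y, factor, step=1.0):
    # One gradient step on 0.5 * ||A x - y||^2, which pulls the
    # estimate back toward the measured low-resolution signal.
    residual = downsample(x, factor) - y
    return x - step * upsample_adjoint(residual, factor, x.shape[0])

def denoise(x, weight=0.5):
    # Stand-in for a learnable denoiser (a small network in practice):
    # a 3-tap vertical average blended with the input.
    padded = np.pad(x, ((1, 1), (0, 0)), mode="edge")
    smooth = (padded[:-2] + padded[1:-1] + padded[2:]) / 3.0
    return (1.0 - weight) * x + weight * smooth

def conditional_gate(x_dc, x_dn, semantic_map):
    # Stand-in for conditional attention: a per-pixel weight in [0, 1]
    # (hypothetically derived from semantic cues) decides how much of
    # the denoised estimate replaces the data-consistent one.
    return semantic_map * x_dn + (1.0 - semantic_map) * x_dc

def unrolled_sr(y, factor, semantic_map, num_iters=5):
    # Initialise by repeating each measured row, then run a fixed
    # number of unrolled iterations.
    x = np.repeat(y, factor, axis=0)
    for _ in range(num_iters):
        x_dc = data_consistency(x, y, factor)
        x_dn = denoise(x_dc)
        x = conditional_gate(x_dc, x_dn, semantic_map)
    return x

# Example: a synthetic 16-channel scan upsampled to 64 rows.
rng = np.random.default_rng(0)
low_res = rng.uniform(1.0, 50.0, size=(16, 8))
guidance = rng.uniform(0.0, 1.0, size=(64, 8))  # hypothetical semantic weights
high_res = unrolled_sr(low_res, factor=4, semantic_map=guidance)
```

In a learned version of this loop, the denoiser and the gate would be trainable modules, and unrolling a fixed number of iterations is what keeps the resulting network compact.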

Rather than relying on a heavy reconstruction model followed by separate processing, the framework is designed as a compact and efficient pipeline suitable for real deployment.

The proposed pipeline combines conditional LiDAR super-resolution with high-resolution semantic segmentation in a unified architecture.

What was Achieved?

The method was evaluated on public benchmarks and also tested in a real-world deployment setting. The results showed that the framework can enhance sparse LiDAR input while supporting more reliable semantic segmentation, particularly in conditions where low-resolution sensing would normally limit perception quality.

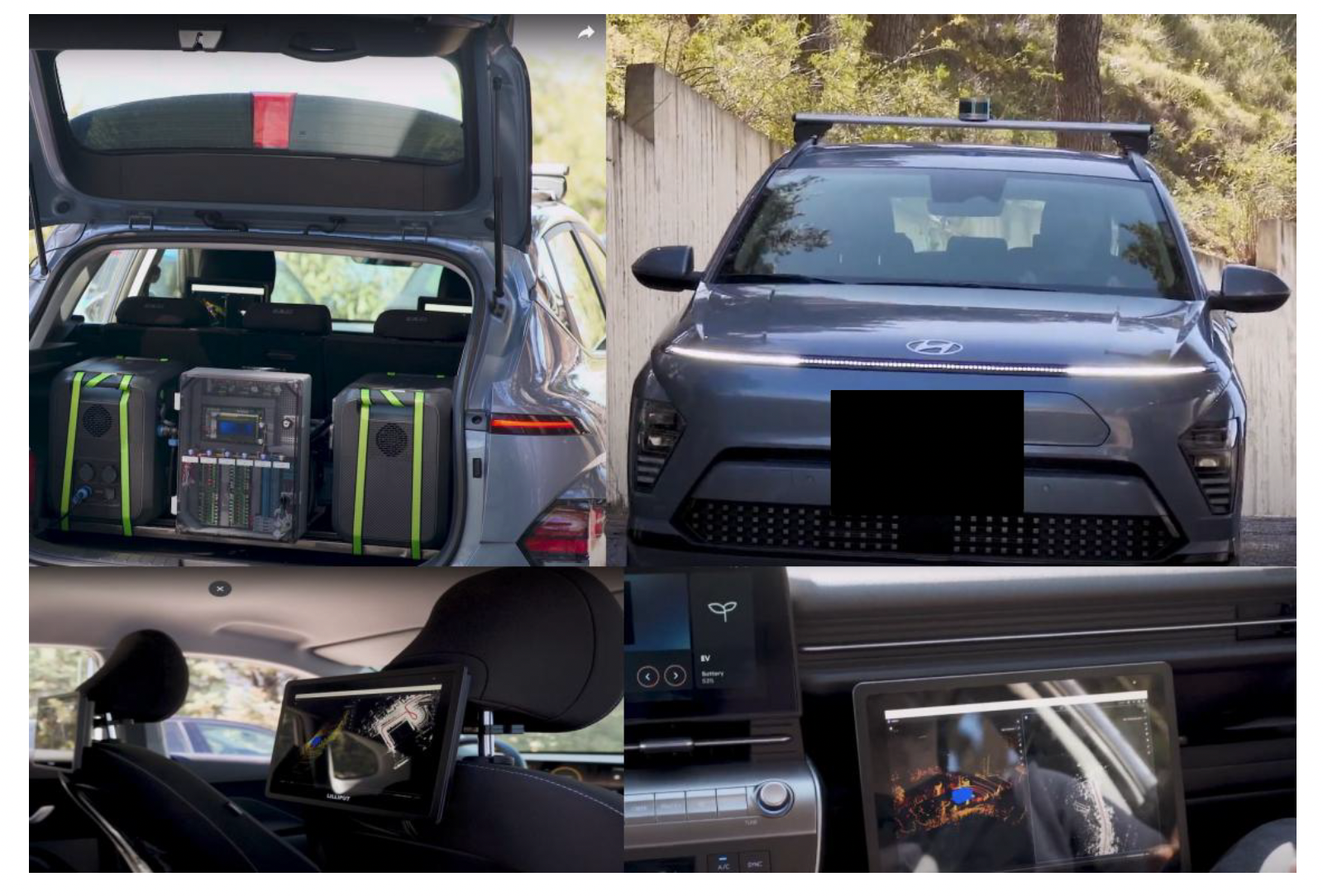

An important part of the work is that the framework was also deployed on a demo vehicle equipped with an NVIDIA Jetson Orin and a 16-channel LiDAR sensor, showing that the approach is not only effective in offline experiments but also feasible in embedded automotive settings.

Why it Matters for AutoTRUST?

For AutoTRUST, this work is relevant because it supports the development of more efficient, deployable, and trustworthy perception systems for autonomous mobility. In practice, trustworthy autonomy depends not only on accuracy but also on whether a perception method can run reliably on real hardware and under realistic sensing constraints.

By improving the usefulness of low-cost LiDAR data and linking enhancement directly to the perception objective, this work contributes to more practical and scalable solutions for real-world autonomous vehicle operation.

Real-world deployment of the proposed framework on the AutoTRUST demo vehicle platform.